Everyone wants to do DevOps, but history proves that far from everyone succeeds at it. There’s nothing bad about failures (at least, that’s what DevOps philosophy advocates), but they shouldn’t slip away unnoticed. We decided to delve into some statistics and DevOps failure case studies, not to point fingers but to get to the bottom of the causes and let you learn from the mistakes of others.

Why DevOps Doesn’t Work

The consequences of DevOps failures can be so extreme that they immediately hit the headlines and are discussed long afterward. Have you heard about Knight Capital, which was pushed to the brink of bankruptcy in 45 minutes by a failed deployment? To be fair, there are only a handful of stories like that. And because they are rare, we tend to think that it’ll never happen to us. Indeed, the odds are low. However, DevOps fails not only when damage is done, but also when the organization can’t leverage it the way it’s supposed to. According to Gartner, by 2023, a staggering 90% of DevOps initiatives will have failed to meet expectations. Businesses that don’t want to become part of this statistic need to understand what they’re doing wrong and how to fix it.

Failing to identify the importance of organizational culture

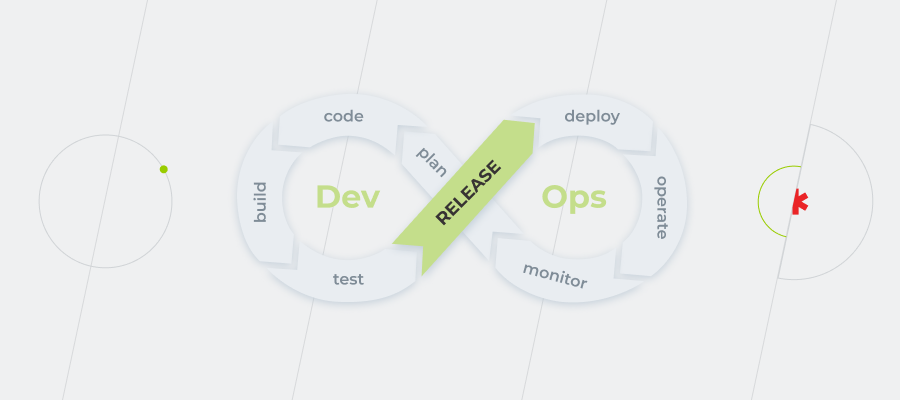

Perhaps one of the biggest misconceptions about DevOps is that it’s only an IT initiative. In fact, the problems organizations need to solve are a combination of culture and technology. Sometimes DevOps is equated with automation or cloud, but it’s so much more than either of those. While delivering a successful DevOps practice without using cloud technology or automating repetitive tasks would be difficult, using them doesn’t automatically (pun intended) make you good at DevOps. Instead, good DevOps comes from a cultural shift toward better communication, collaboration, and integration across the company. In addition, companies need to address organizational and team concerns, including helping teams clarify their mission, primary customers, interfaces, and what makes for healthy interactions with others.

In an interview with InfoWorld, Bryan Dawson, a DevOps evangelist, shared one of his first experiences in DevOps, which resulted in a failed application release. Working as a consultant for a U.S. government agency, he took part in the deployment of a new supporting DevOps platform, which was supposed to help with planning, coding, building, and releasing the app. At first, the project seemed promising, but it soon became clear that tooling alone is not enough to succeed. Focused on tools, the team lost sight of people and processes and literally supported legacy practices with modern instruments.

So one of the biggest blockers that keeps organizations from using DevOps to its full potential is the failure to create an appropriate culture. As cheesy as it may sound, the best results are achieved when we start viewing DevOps as a cultural paradigm. However, simply talking about culture won’t help if the talk doesn’t turn into concrete actions. DevOps is a verb: it’s not something you have, it’s something you do.

According to the Puppet State of DevOps 2021 Report, DevOps really works when leadership makes it a priority: 60% of highly evolved organizations say that top management actively promotes DevOps. It’s both top-down and bottom-up work: the practices are set from above and are supported by the whole staff.

Apart from passive leadership, other cultural reasons why companies get stuck in a rut with DevOps are risk management practices that favor infrequent deployments, unclear responsibilities, and limited knowledge sharing. So what can be done to change that?

Too many organizations, when seeking cultural change, focus too much on these surface elements—add a foosball table and a few bean bags in the office and suddenly everyone will start acting like we’re an innovative start-up, right? That’s not the way it works.

— Stephen Thair, CTO, DevOpsGroup

You may start with the following:

- Change leadership behavior at every level by generating meaningful conversations with your team and enabling them to understand why the status quo is no longer good enough.

- Hire new people with new ideas, for whom “agile” is not an empty phrase.

- Think about what you can do to nudge your staff in the right direction, such as rewarding the behaviors that move the company forward or challenging ones that don’t align with the direction you are trying to go.

- Introduce agile ways of working, like Scrum or Kanban, to visualize workflow and speed up business value delivery. You may also try to apply the idea of “pair programming” to areas besides programming. If the pairs are chosen well, not only will the quality of work increase, but interpersonal skills will also develop significantly and knowledge sharing will become established.

Trying to do a new thing in the old ways

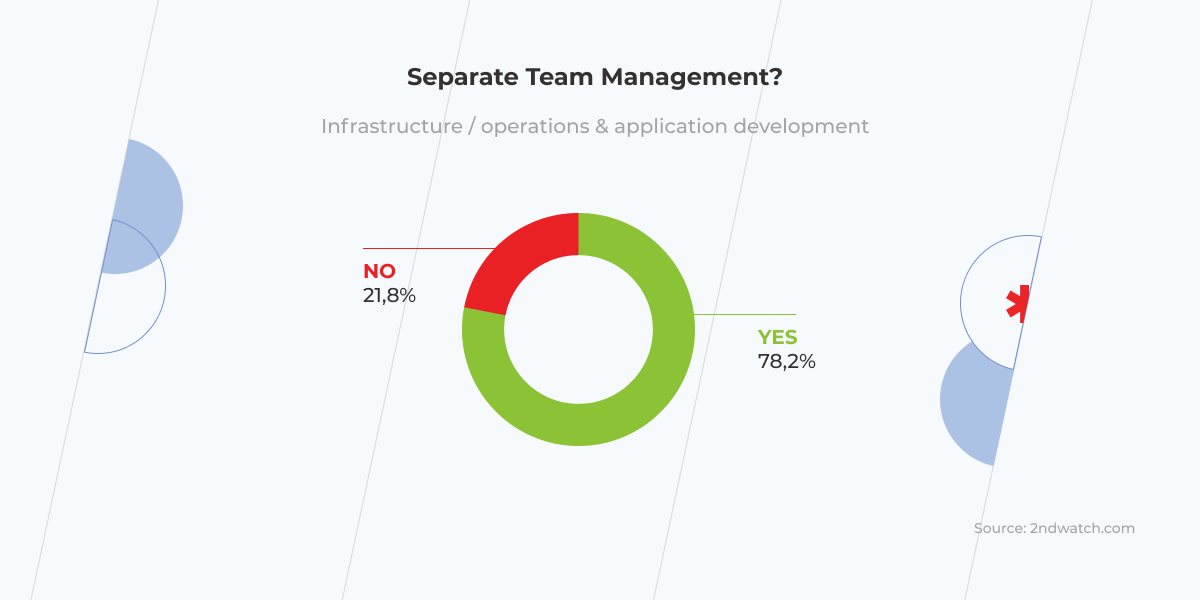

2nd Watch’s DevOps survey found that just 22% of organizations are engaged in DevOps in its purest form, while 78% of organizations that are supposed to be doing DevOps still have separate management for infrastructure/operations and engineering teams. However, DevOps can’t exist without a truly collaborative environment that breaks down silos across the whole organization. All the stakeholders, including business people, developers, operations teams, security teams, and QA, must be engaged in creating the product and getting it out the door.

Another thing the survey revealed is that more than a third of the respondents manage infrastructure manually. Besides contradicting the DevOps philosophy, this approach increases the risk of human error and wastes time that could instead be spent on generating compelling ideas. It also wears down sysadmins, turning them into angry, burned-out folks ready to blow it all up. As infrastructure expands, the process slows down even more, while the risk of errors skyrockets. Moreover, companies can miss out on the most useful practices, like well-organized scaling. For instance, one server might be enough for an online store at night, when there are few if any customers; keeping more servers running means paying for cloud infrastructure that you don’t need. With an automated approach, your cloud infrastructure scales down on its own whenever demand drops.
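To make the online store example concrete, here is a minimal sketch of the decision an autoscaler makes. It is an illustration only: the function name `desired_servers`, the per-server capacity of 100 requests per second, the server limits, and the traffic figures are all assumptions, and no real cloud provider’s API is involved.

```python
import math


def desired_servers(requests_per_second: float,
                    capacity_per_server: float = 100.0,  # assumed capacity
                    min_servers: int = 1,
                    max_servers: int = 20) -> int:
    """Return how many servers the current load calls for.

    The result is clamped between min_servers and max_servers, so the
    store never drops to zero servers and never grows without limit.
    """
    if capacity_per_server <= 0:
        raise ValueError("capacity_per_server must be positive")
    needed = math.ceil(requests_per_second / capacity_per_server)
    return max(min_servers, min(max_servers, needed))


# Hypothetical traffic for an online store (requests per second).
hourly_load = {"03:00": 4, "12:00": 850, "20:00": 1430}

for hour, load in hourly_load.items():
    print(hour, "->", desired_servers(load), "server(s)")
```

A real autoscaler would run a rule like this on live metrics and add cooldown periods so the fleet doesn’t flap between sizes, but the core idea is the same: the night-time load gets one server without anyone having to remember to switch the others off.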

One more blast from the past that holds DevOps back in at least a quarter of companies is having little or no code testing in place. In today’s competitive market, where development and release cycles keep getting shorter, winning the race of continuous delivery is impossible without making continuous testing an integral part of CI/CD pipelines. If testing isn’t run properly, it won’t be long before application crashes and customer service issues occur. That was the case with the already mentioned U.S. government agency when it launched a web application. Right after the application was released, it experienced critical and very public failures because it hadn’t been properly tested during the delivery process. It took the tech team multiple weeks to deal with the issue and get the site operational. Can you imagine how much cheaper it would have been for the agency if the bugs had been identified at an early stage of development?

To accelerate release cycles and, at the same time, ensure error-free outputs, testing must stop being a segregated stage at the end of delivery and become an integral DevOps activity that covers development, integration, pre-release, production, delivery, and deployment.

The legacy of ‘legacy’

In this year’s State of DevOps survey, Puppet found that for 28% of respondents, legacy architecture is one of the main barriers to better DevOps practices.

These legacy systems, they’re just like these hairballs that the cat coughed up.

— Charity Majors, CTO and Co-founder, Honeycomb.io

Working on things designed decades ago is arduous. Fortunately, such systems can still take advantage of modern practices and a pinch of agility. Sometimes simply moving an application into a virtualized environment allows for better test coverage, which enables faster and more confident changes.

In any case, a ‘leave it alone’ attitude only widens the gap between the current state of the organization and its future improvement, and paves the way for DevOps failures. As tough as modernization might be, the survival of companies that were not born digital depends on it. But labeling your organizational dynamics problem as ‘legacy’ without identifying the specific issues is far from useful. To make progress in modernizing obsolete systems, you need to analyze them, sort them into easily understood categories, and set explicit goals and action plans.

Automation is a double-edged sword

Anything that you do more than twice has to be automated.

— Adam Stone, CEO, D-Tools

Automation is key in the DevOps movement. A lot of work, like installing packages, building Docker containers, monitoring, logging, and alerting, just shouldn’t be done manually. First, manual processes don’t scale. Second, humans are not really good at doing the same things over and over again; that’s what computers are for. ‘So why not leverage them?’ DevOps adherents ask rhetorically. No reason. Still, a tool as powerful as automation must be handled with care.

The fall of Knight Capital Group can serve as a cautionary tale when it comes to automation. Knight was, at one point, the largest US-based equities trader. To send orders to the market for execution, Knight used an automated application known as SMARS, which had many outdated parts in its codebase. Eventually, the company decided that one such part, old and unused code referred to as “Power Peg”, should be replaced. When the new code was deployed, it unintentionally activated the Power Peg functionality, which was still in the codebase. Because the system was sending automated, high-speed orders into the market and nobody was tracking them, Knight experienced 45 minutes of hell. That was enough time for the app to make around 4 million transactions worth 3 billion dollars. Just like that, automation turned into a knightmare, resulting in a $460 million loss that pushed the company to the brink of bankruptcy.

By contrast, anyone who has used Netflix has probably noticed that some streams (e.g. ‘Trending Now’ or ‘Popular on Netflix’) occasionally disappear. This happens because the instance group that serves that stream is down. Meanwhile, the app itself doesn’t suffer any deterioration in performance, and the company doesn’t lose customers.

Perhaps the main lesson to be learned from the Knight Capital story is that you should automate not as much as possible, but as much as is reasonable. In that case, it would have been reasonable to turn software releases into a repeatable and reliable process by implementing an automated deployment system. Had Knight done that, the fatal error could have been avoided.
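One small piece of such a repeatable release process can be sketched in a few lines: before traffic is switched on, check that every server reports the release you meant to ship. This is a hypothetical illustration, not a reconstruction of Knight’s systems; the server names, version strings, and function names are invented for the example.

```python
from typing import Dict, List


def find_stale_servers(reported_versions: Dict[str, str],
                       expected: str) -> List[str]:
    """Return, in sorted order, the servers not running the expected release."""
    return sorted(
        name for name, version in reported_versions.items()
        if version != expected
    )


def safe_to_enable(reported_versions: Dict[str, str], expected: str) -> bool:
    """Allow go-live only if at least one server reported and none is stale."""
    return bool(reported_versions) and not find_stale_servers(
        reported_versions, expected
    )


# Hypothetical fleet: one server was missed during the rollout.
fleet = {
    "order-router-1": "2.4.0",
    "order-router-2": "2.4.0",
    "order-router-3": "2.3.9",  # still running the old code
}

if not safe_to_enable(fleet, expected="2.4.0"):
    print("Blocking release; stale servers:", find_stale_servers(fleet, "2.4.0"))
```

The point of the design is that the gate is part of the pipeline rather than a step somebody has to remember: a release with a forgotten server simply doesn’t go live.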


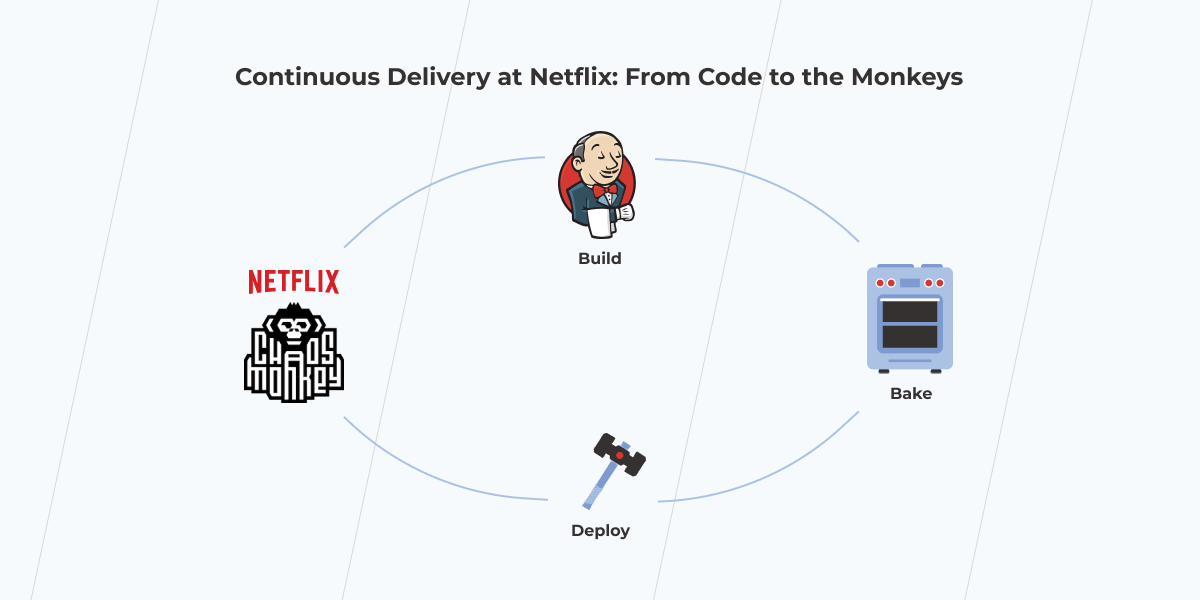

Bonus: Netflix DevOps case study. If you can’t beat failure, automate it

For those who are tired of reading about DevOps failures, here’s the example of Netflix, which shows how a fundamental understanding of DevOps can help make failure a friend rather than an enemy.

Netflix is made up of hundreds of microservices hosted on the cloud. To provide uninterrupted video streams for the customers across a wide array of devices, Netflix engineers have to ensure all the components are working together properly. Nonetheless, it’s barely possible to find a system that is 100% reliable. Instead of resisting the obvious, Netflix acted in a truly DevOps style – they accepted that failure was going to happen, planned it in advance, and went even further by automating it.

This is possible thanks to Chaos Monkey, a tool invented by Netflix to test the resilience of its infrastructure. The tool randomly shuts down server instance groups to check how the remaining systems respond to the outage. The artificially created ‘chaos’ allows developers to prepare for the real thing rather than just waiting for a disaster to strike. Such an approach encourages engineers to design modular, testable, and highly resilient systems from the start.
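The idea can be shown with a toy simulation. The sketch below is not Chaos Monkey itself and uses none of its code or configuration; the group names and functions are made up for illustration. It shuts down one randomly chosen instance group and then checks that the page still renders with the remaining ones.

```python
import random
from typing import Dict, List


def render_homepage(groups: Dict[str, bool]) -> List[str]:
    """Return the rows whose backing instance group is up, skipping the rest."""
    return [row for row, healthy in groups.items() if healthy]


def chaos_round(groups: Dict[str, bool], rng: random.Random) -> str:
    """Shut down one randomly chosen healthy group and return its name."""
    healthy = [name for name, up in groups.items() if up]
    if not healthy:
        raise ValueError("no healthy groups left to shut down")
    victim = rng.choice(healthy)
    groups[victim] = False
    return victim


# Hypothetical instance groups, each serving one row of the home page.
groups = {"Trending Now": True, "Popular on Netflix": True, "Continue Watching": True}

victim = chaos_round(groups, random.Random(42))
page = render_homepage(groups)

# The experiment passes if the page degrades gracefully instead of failing.
print("Shut down:", victim)
print("Still rendered:", page)
```

A service that can’t pass a check like this in a controlled experiment is unlikely to pass it during a real outage, which is why it pays to find out ahead of time.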

Get prepared for your DevOps journey

Having a deep understanding of what DevOps is and being ready to shift to a new workflow, a new mindset, and a new culture are essential to success in DevOps. As for failure, it’s not something to be terrified of. A number of things can go wrong, but you have a good chance of avoiding them by planning recovery in advance and learning the lessons your predecessors have taught.